Understanding the Integration Paths

When implementing Portkey with Open WebUI you can choose between two complementary approaches:

- Direct OpenAI-compatible connection – ideal if you already rely on Portkey’s Model Catalog and simply need to expose those models inside Open WebUI.

- Portkey Manifold Pipe – our official pipe unlocks richer governance, metadata, and resilience features directly within Open WebUI.

If you’re an individual user just looking to use Portkey with Open WebUI, you only need to complete the workspace preparation and one of the integration options below.

1. Prepare Your Portkey Workspace

Create Portkey API Key

- Go to the API Keys section in the Portkey sidebar.

- Click Create New API Key with the permissions you plan to expose.

- Save and copy the key — you’ll paste it into Open WebUI.

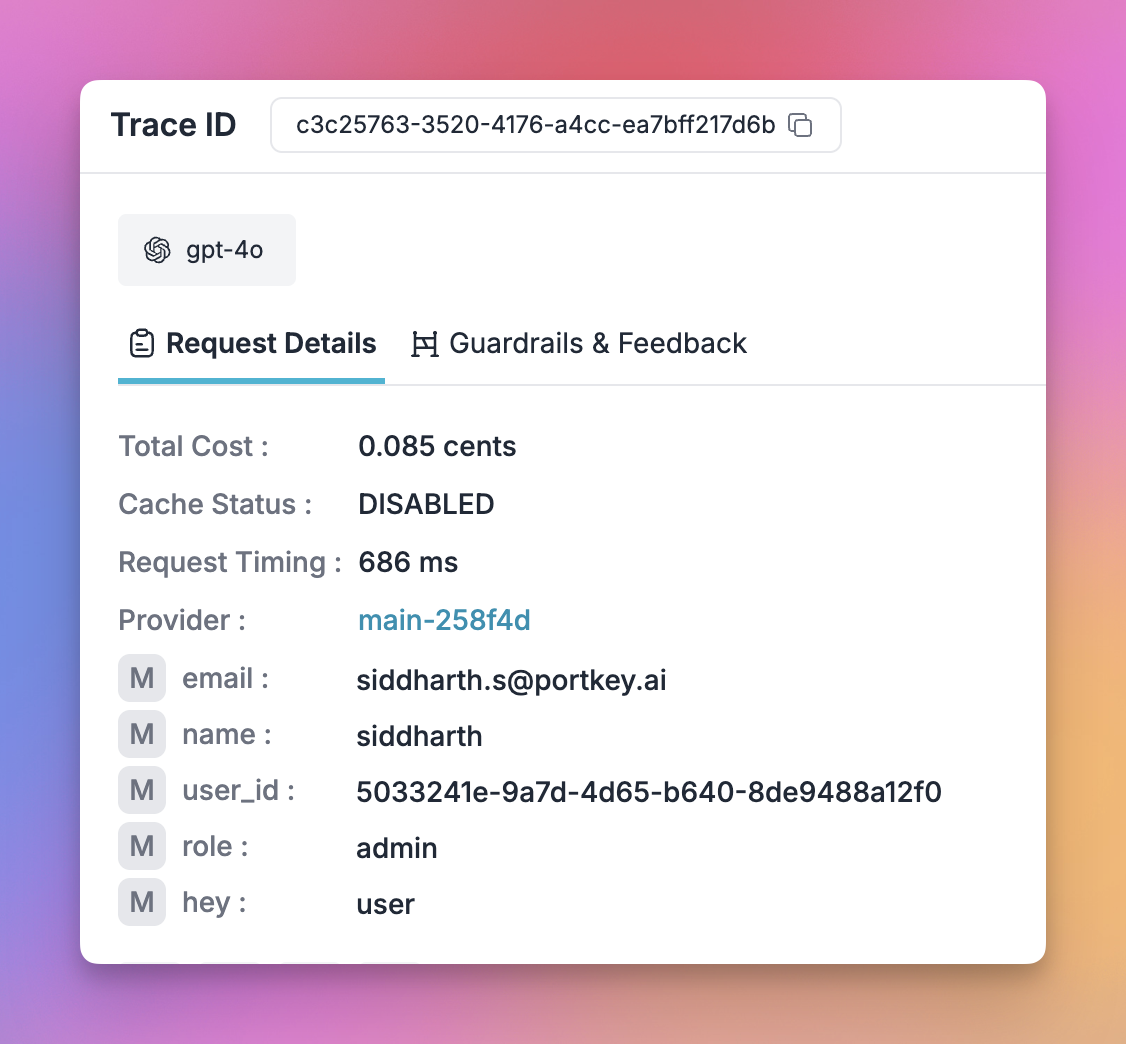

Add Your Provider

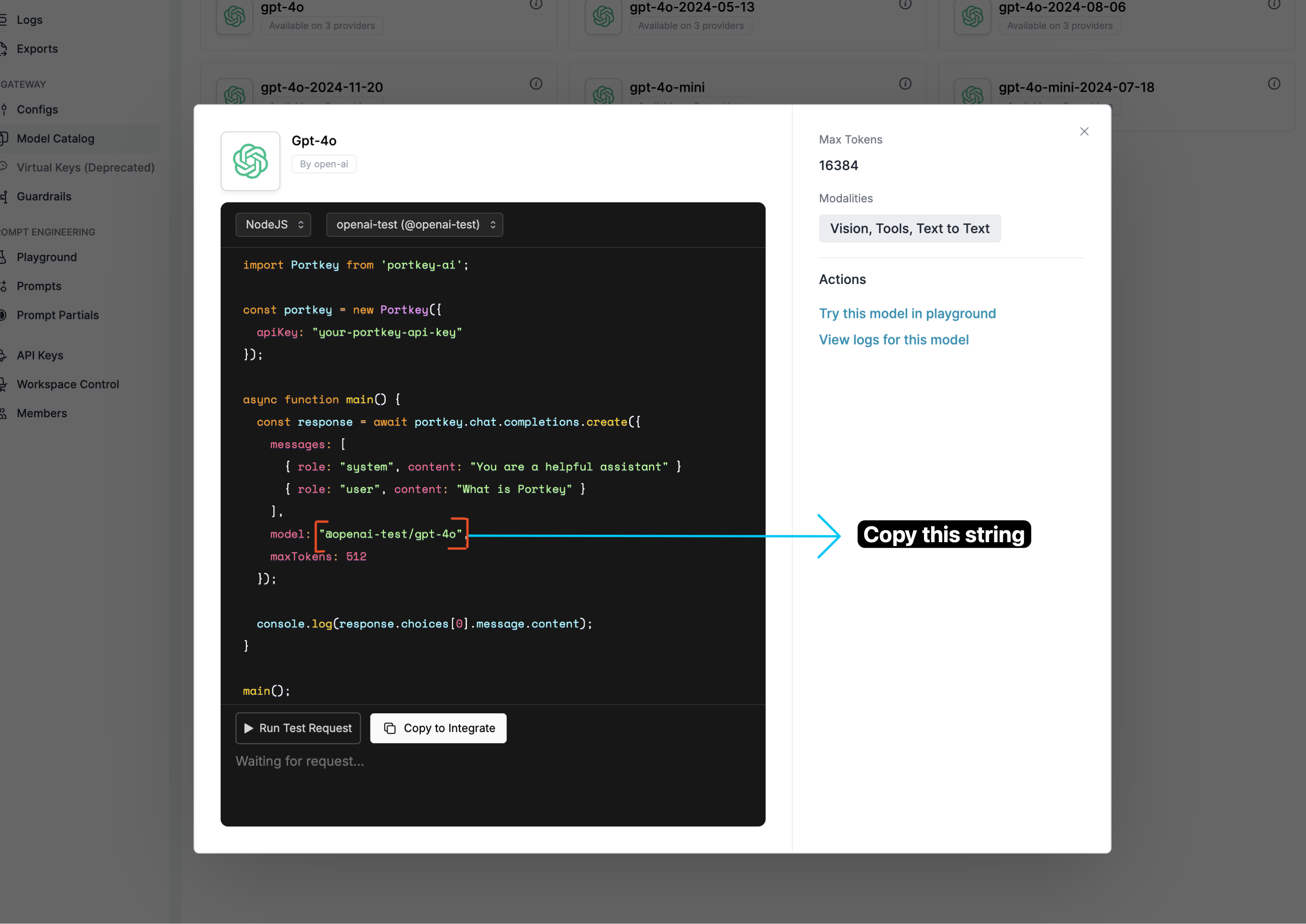

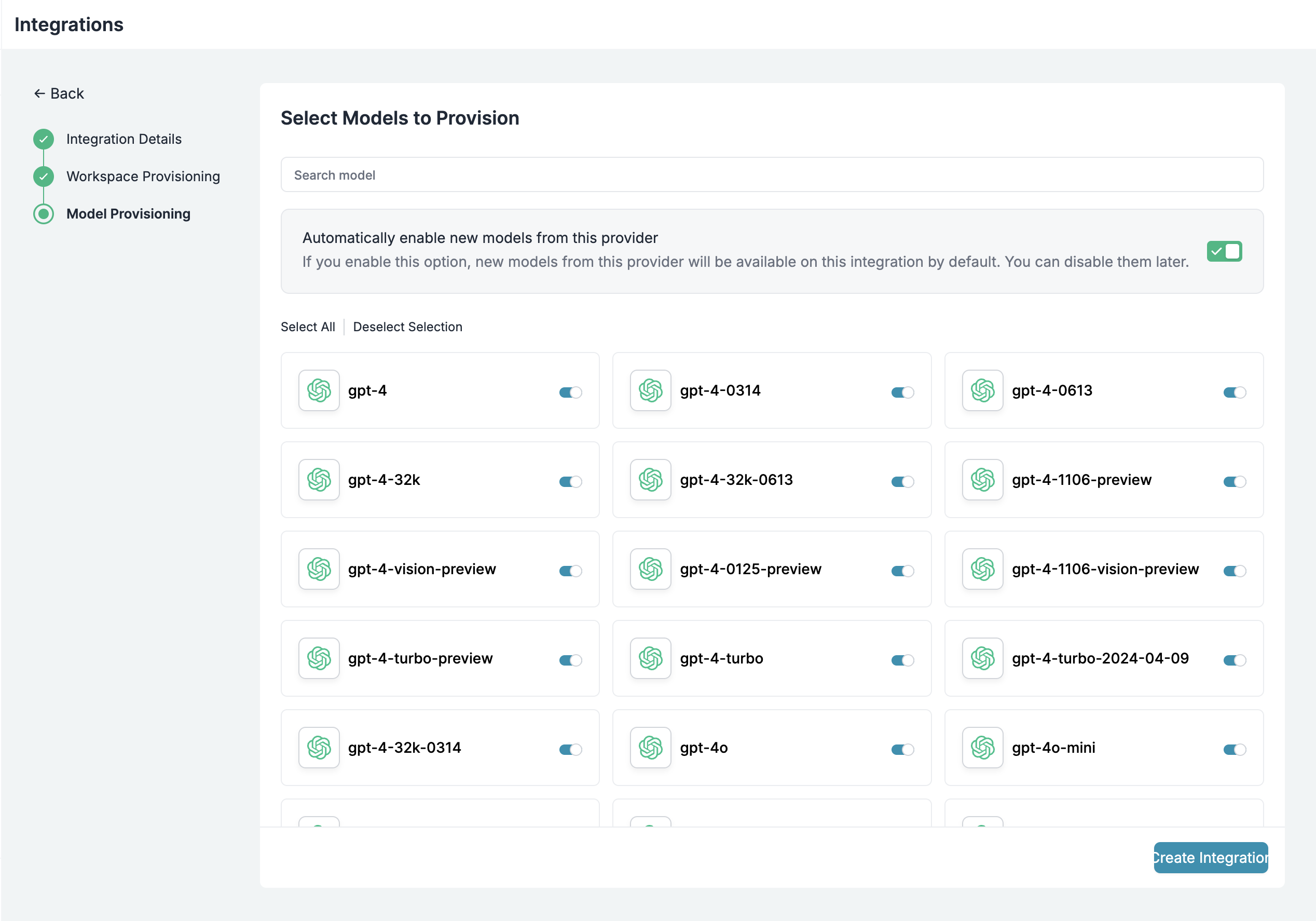

- Navigate to Model Catalog → AI Providers.

- Click Create Provider (if this is your first time using Portkey).

- Select Create New Integration → choose your AI service (OpenAI, Anthropic, etc.).

- Enter your provider’s API key and required details.

- (Optional) Configure workspace and model provisioning.

- Click Create Integration.

2. Connect Open WebUI to Portkey

Choose the option that best fits your needs.

Option A: Direct OpenAI-Compatible Connection

This route keeps your setup lightweight by pointing Open WebUI at Portkey’s OpenAI-compatible endpoint. You’ll need the API key and model slugs collected in Step 1.

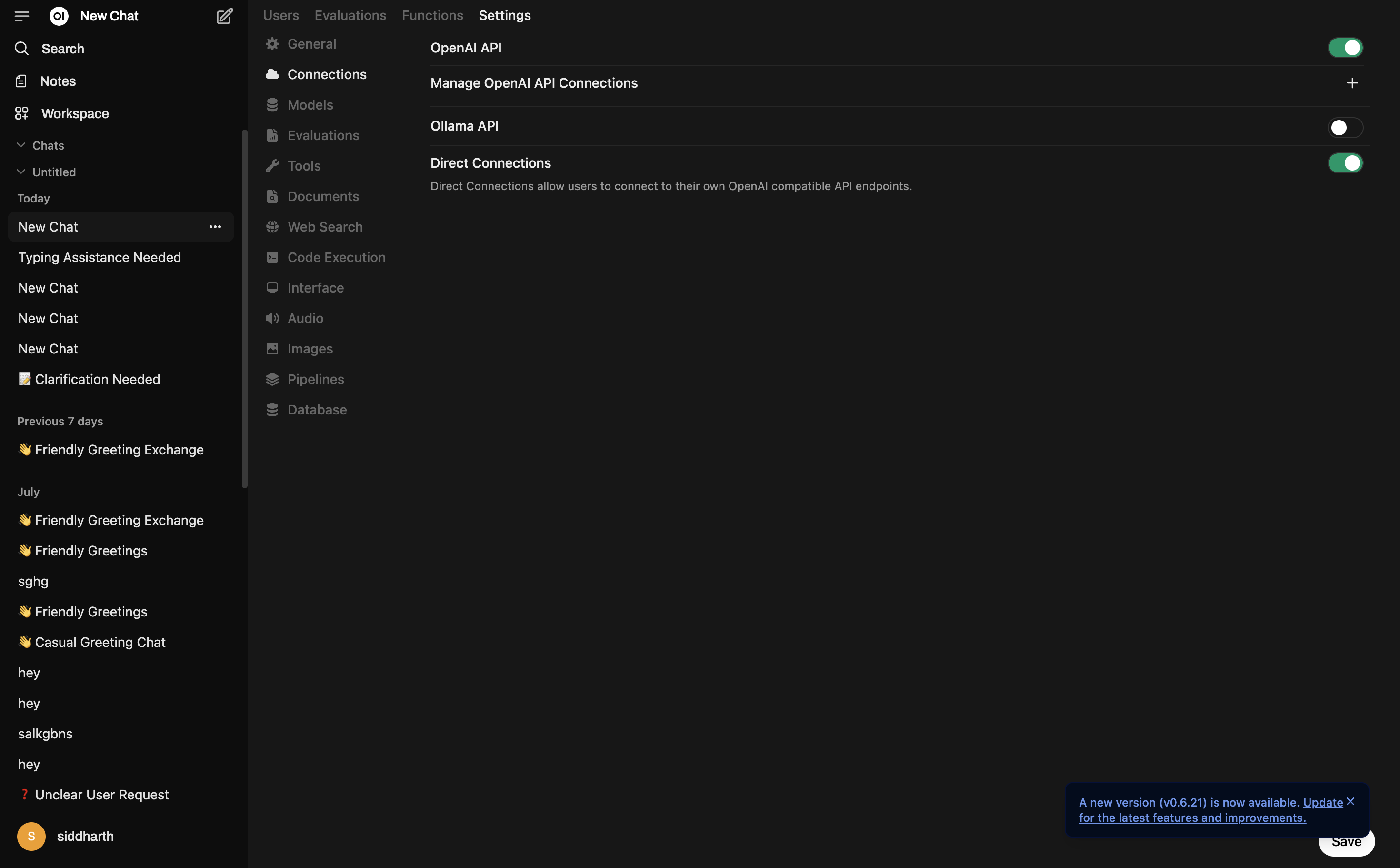

Access Admin Panel

- Start your Open WebUI server.

- Click your username at the bottom left.

- Open the Admin Panel → Settings tab → select Connections from the sidebar.

Enable Direct Connections

- Turn on Direct Connections and the OpenAI API toggle.

- Click the + icon next to Manage OpenAI API Connections.

Configure Portkey Connection

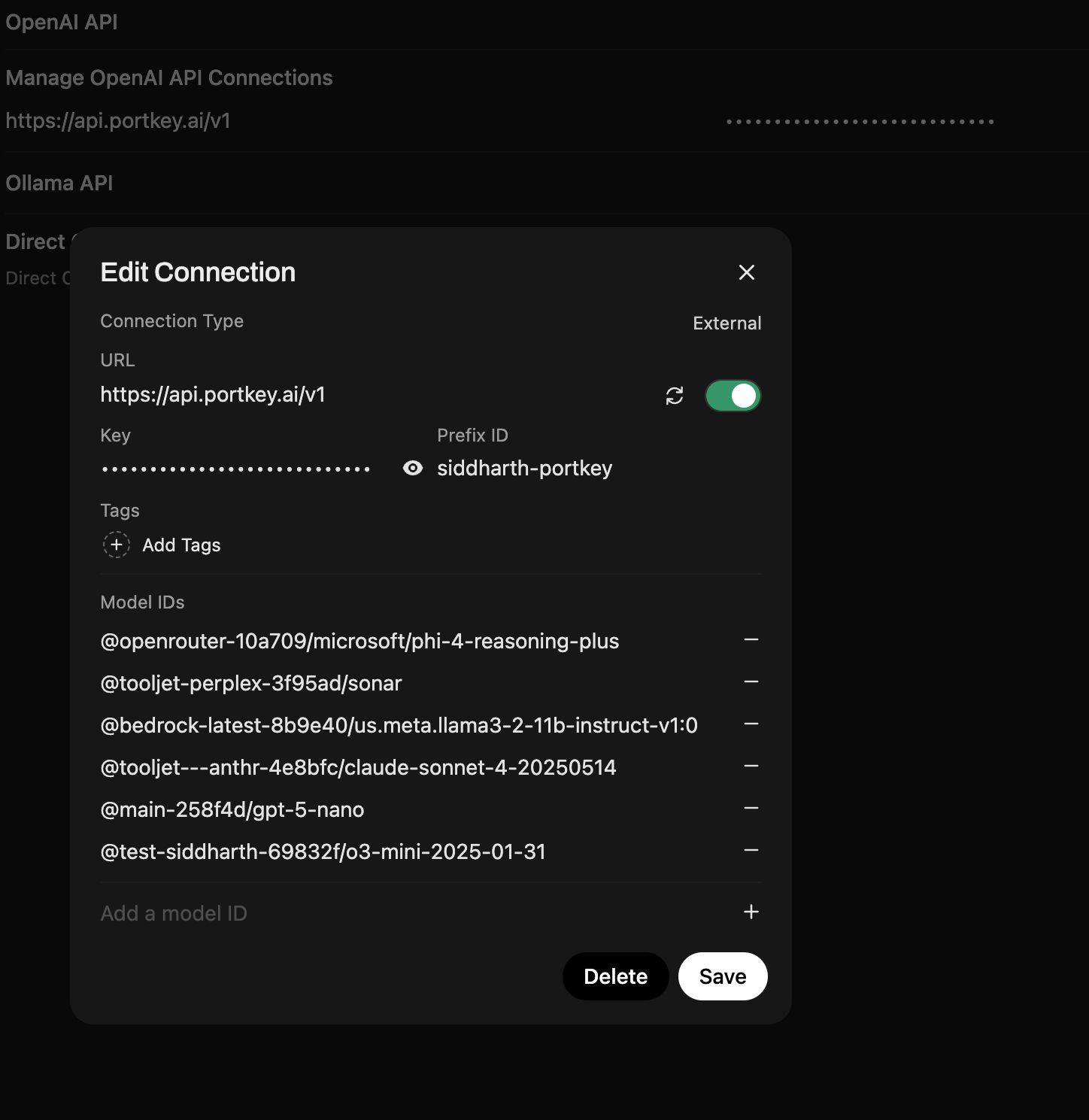

Fill in the Edit Connection dialog:

- URL: `https://api.portkey.ai/v1`

- Key: Your Portkey API key (with a default config attached, if needed)

- Prefix ID: `portkey` (or a label you prefer)

- Model IDs: Slugs such as `@openai/gpt-4o` or `@anthropic/claude-3-sonnet`

Tip: You can leave the Model IDs field empty during “Edit Connection” and Open WebUI will automatically fetch all available model slugs from your Portkey Model Catalog.
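To see what this connection amounts to on the wire, here is a minimal Python sketch that builds (but does not send) the kind of chat-completion request Open WebUI makes against the URL above. It assumes the Portkey API key travels as a standard `Authorization: Bearer` token, as with any OpenAI-compatible endpoint, and that the `model` field carries a Model Catalog slug. `build_chat_request` is a hypothetical helper, not part of Open WebUI or Portkey.

```python
import json
import urllib.request

PORTKEY_BASE_URL = "https://api.portkey.ai/v1"


def build_chat_request(api_key: str, model_slug: str, prompt: str) -> urllib.request.Request:
    """Hypothetical helper: build an OpenAI-style chat request aimed at Portkey."""
    body = {
        "model": model_slug,  # Model Catalog slug, e.g. "@openai/gpt-4o"
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{PORTKEY_BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            # Assumption: the Portkey key is sent as a Bearer token, the same way
            # Open WebUI sends the "Key" field to any OpenAI-compatible URL.
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_chat_request("YOUR_PORTKEY_API_KEY", "@openai/gpt-4o", "Hello!")
    print(req.get_method(), req.full_url)
    # urllib.request.urlopen(req) would actually send it.
```

In this sketch no provider API key appears in the request: the provider credentials stay in the integration you created in Step 1, and only the Portkey key and the slug leave Open WebUI.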

Using Portkey Configs: Since Open WebUI doesn’t support custom headers, attach a default config to your API key in Portkey to use features like fallbacks, caching, or routing. See Default Configs documentation for setup instructions.
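As a rough illustration of what such a default config might contain, the Python sketch below assembles a config that falls back from one model to another and turns on simple caching, then prints it as JSON. The `strategy`, `cache`, and `targets` keys follow Portkey's config format as we understand it, but the target shape (a full Model Catalog slug inside `override_params.model`) and both slugs are assumptions; confirm the exact fields against the Configs documentation before saving a config in the Configs Library.

```python
import json

# Hypothetical slugs: substitute the provider slugs from your own Model Catalog.
PRIMARY_MODEL = "@openai-prod/gpt-4o"
BACKUP_MODEL = "@anthropic-prod/claude-3-5-sonnet-latest"


def build_default_config(primary: str, backup: str) -> dict:
    """Sketch of a config: fall back from primary to backup, with simple caching."""
    return {
        "strategy": {"mode": "fallback"},  # try targets in order until one succeeds
        "cache": {"mode": "simple"},       # exact-match response caching
        "targets": [
            # Assumption: each target pins its model via override_params.
            {"override_params": {"model": primary}},
            {"override_params": {"model": backup}},
        ],
    }


print(json.dumps(build_default_config(PRIMARY_MODEL, BACKUP_MODEL), indent=2))
```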

Option B: Portkey Manifold Pipe (Recommended for Enterprise)

The official Portkey Manifold Pipe unlocks enterprise-grade observability and governance features that aren’t available through the basic OpenAI-compatible connection.

In typical Open WebUI deployments, a single shared API key means you lose visibility into which user made which request: Portkey logs show anonymous traffic instead of per-user attribution. The Portkey Manifold Pipe solves this by automatically forwarding Open WebUI user context to Portkey, enabling true per-user observability, cost tracking, and governance, even with a shared API key setup.

Why Use the Manifold Pipe?

Per-User Attribution

Automatically track which Open WebUI user made each request—essential for enterprise deployments with shared API keys.

Structured Metadata

Forward Open WebUI user context (email, name, role, chat ID) as structured metadata for filtering and analytics.

Auto Model Discovery

Automatically populate Open WebUI’s model dropdown from your Portkey Model Catalog—no manual configuration.

Enhanced Resilience

Built-in retry logic with exponential backoff for non-streaming requests ensures reliable performance.
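The retry behaviour described above follows the standard exponential-backoff pattern. The sketch below is a generic Python illustration of that pattern, not the pipe's actual code; `call_with_backoff`, its defaults (three retries, a 0.5-second base delay), and the injectable `sleep` parameter are hypothetical choices made so the logic is easy to test.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_backoff(
    fn: Callable[[], T],
    max_retries: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn; on failure wait base_delay * 2**attempt seconds and try again."""
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except Exception:
            if attempt == max_retries:
                raise  # retries exhausted: surface the last error
            sleep(base_delay * 2 ** attempt)  # 0.5s, 1s, 2s, ...
    raise RuntimeError("unreachable")  # the loop always returns or raises
```

A likely reason to limit retries to non-streaming requests is that a streamed response may already be partly on screen when an error occurs, so replaying it would duplicate output.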

- Download: portkey_manifold_pipe.py

- Best for: Enterprise deployments with shared infrastructure where user-level tracking, governance, and automated model management are critical.

Install the Pipe

- Open your Open WebUI Workspace.

- Click the user icon in the bottom-left corner.

- Select Admin Panel.

- In the Admin Panel, go to the Functions tab.

- Click the + button to create a new function.

- Copy the contents of portkey_manifold_pipe.py (from the Download link above) and paste them into the editor.

- Set the function name to Portkey Function and add a description.

- Click Save to finish.

Configure Valves

- In Open WebUI, choose the PORTKEY pipe from the dropdown.

- Open the Valves panel and configure:

  - PORTKEY_API_KEY: Your Portkey API key (required)

  - PORTKEY_API_BASE_URL: Default `https://api.portkey.ai/v1` (change only for self-hosted)

  - AUTO_DISCOVER_MODELS: Enable to automatically fetch available models from Portkey’s catalog (recommended)

  - PORTKEY_MODELS: Comma-separated model slugs for manual curation. Example: `@openai-provider/gpt-4o, @anthropic-provider-slug/claude-sonnet-latest`
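To make the two model-listing modes concrete, the Python sketch below shows one way a manifold pipe could turn either the comma-separated PORTKEY_MODELS value or an OpenAI-style `/models` response into the `{"id", "name"}` entries that, as far as we know, Open WebUI manifold pipes return for the model dropdown. It is not the official pipe's implementation: the helper names are hypothetical, and it assumes discovered model IDs are slugs in the `@provider-slug/model-name` form.

```python
from typing import Any, Dict, List, Tuple


def split_slug(slug: str) -> Tuple[str, str]:
    """Split '@provider-slug/model-name' into ('provider-slug', 'model-name')."""
    if not slug.startswith("@") or "/" not in slug:
        raise ValueError(f"not a Model Catalog slug: {slug!r}")
    provider, model = slug[1:].split("/", 1)  # model names may themselves contain '/'
    return provider, model


def display_name(slug: str) -> str:
    """Friendly dropdown label such as 'gpt-4o (openai-provider)'."""
    try:
        provider, model = split_slug(slug)
    except ValueError:
        return slug  # not in @provider/model form: show it unchanged
    return f"{model} ({provider})"


def models_from_valve(portkey_models: str) -> List[Dict[str, str]]:
    """Manual curation: parse the comma-separated PORTKEY_MODELS valve."""
    slugs = [s.strip() for s in portkey_models.split(",")]
    return [{"id": s, "name": display_name(s)} for s in slugs if s]  # skip blanks


def models_from_catalog(models_response: Dict[str, Any]) -> List[Dict[str, str]]:
    """Auto-discovery: parse an OpenAI-style list response {"data": [{"id": ...}]}."""
    return [
        {"id": m["id"], "name": display_name(m["id"])}
        for m in models_response.get("data", [])
    ]
```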

Enterprise Tip: The pipe automatically forwards Open WebUI user metadata (name, email, role, chat_id) to Portkey via the

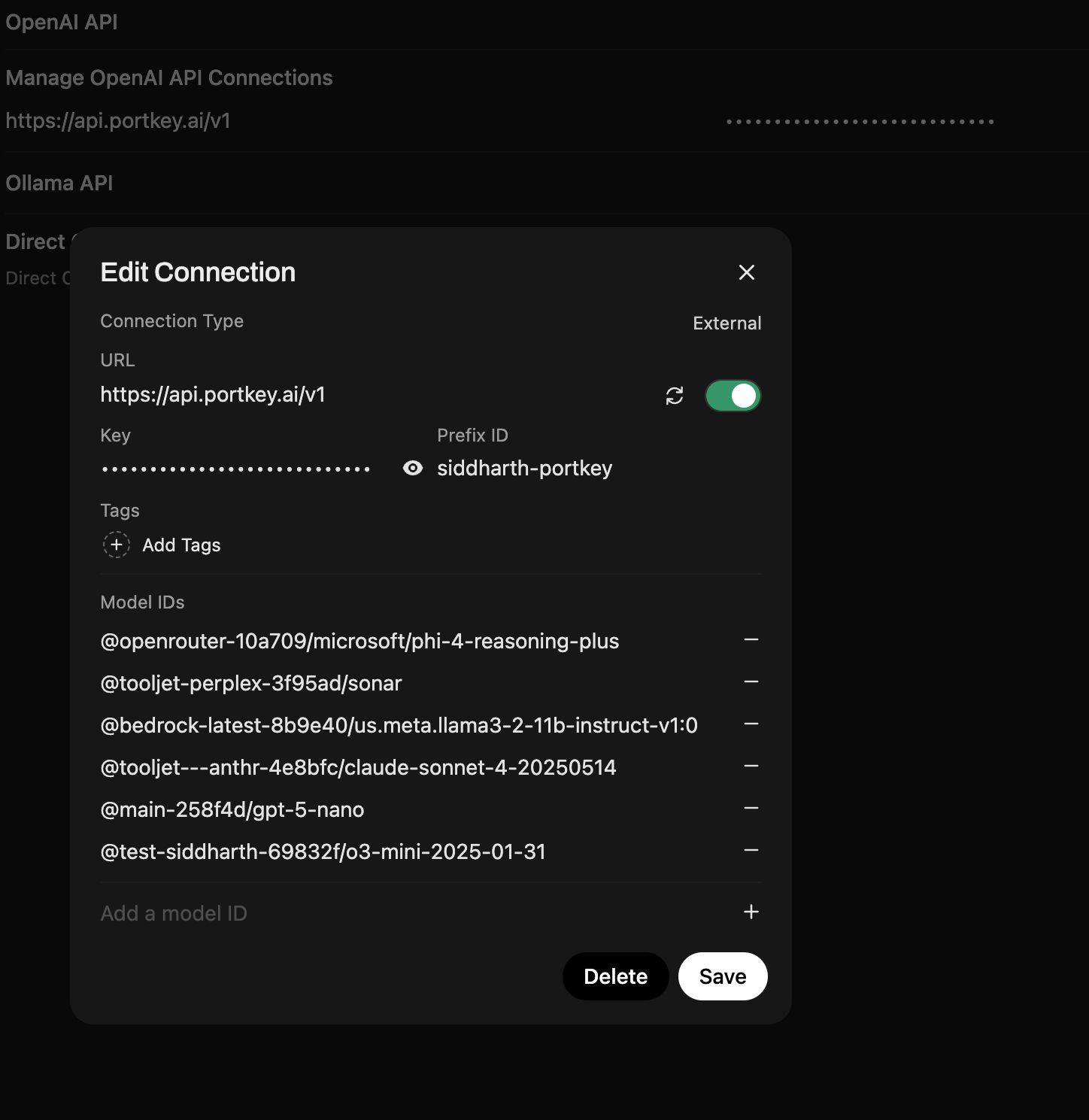

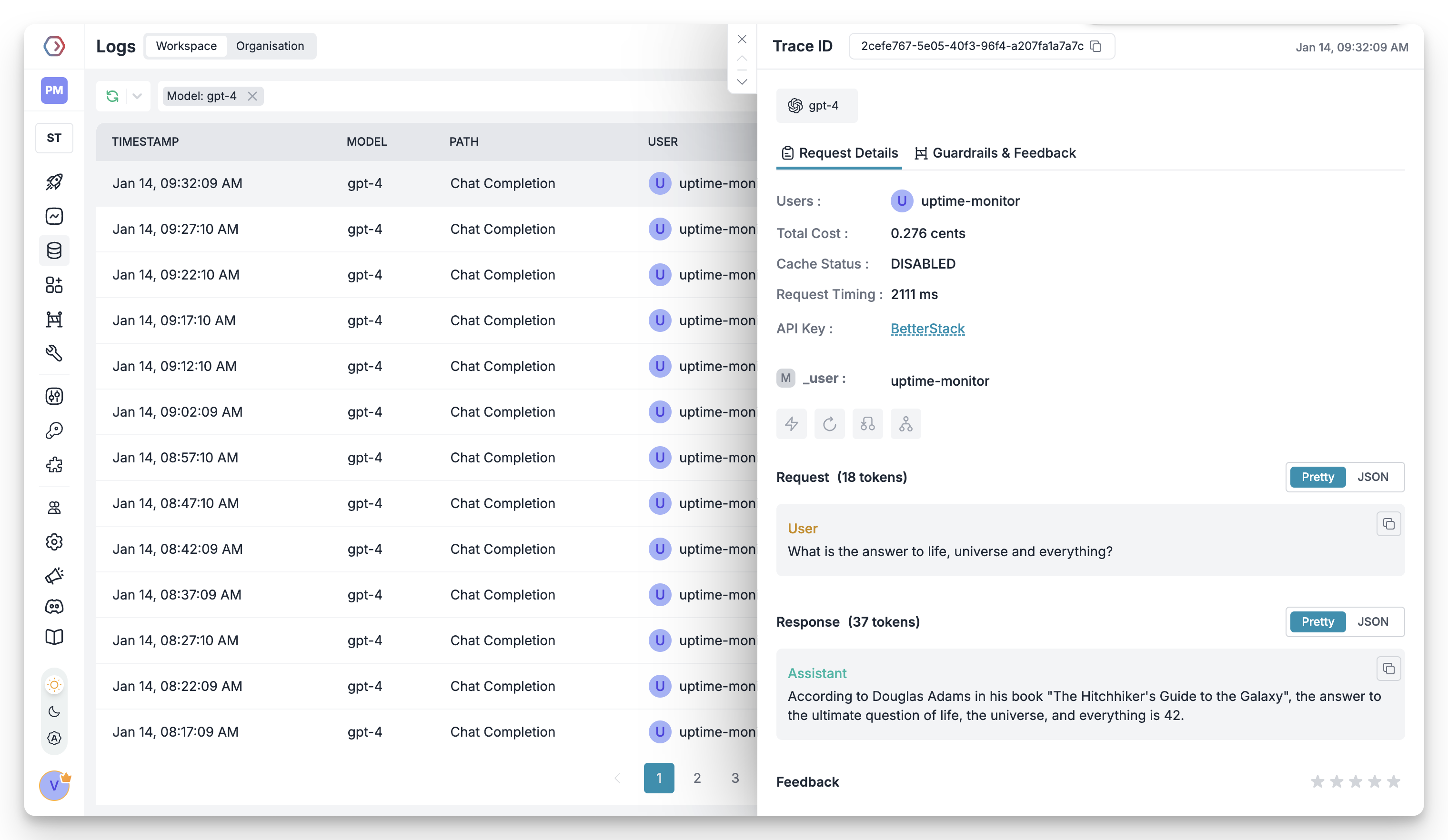

x-portkey-metadata header. This solves the shared API key attribution problem—you’ll see per-user details in Portkey logs even when all users share the same API key.Verify User Attribution

- Start chatting in Open WebUI with the PORTKEY pipe selected.

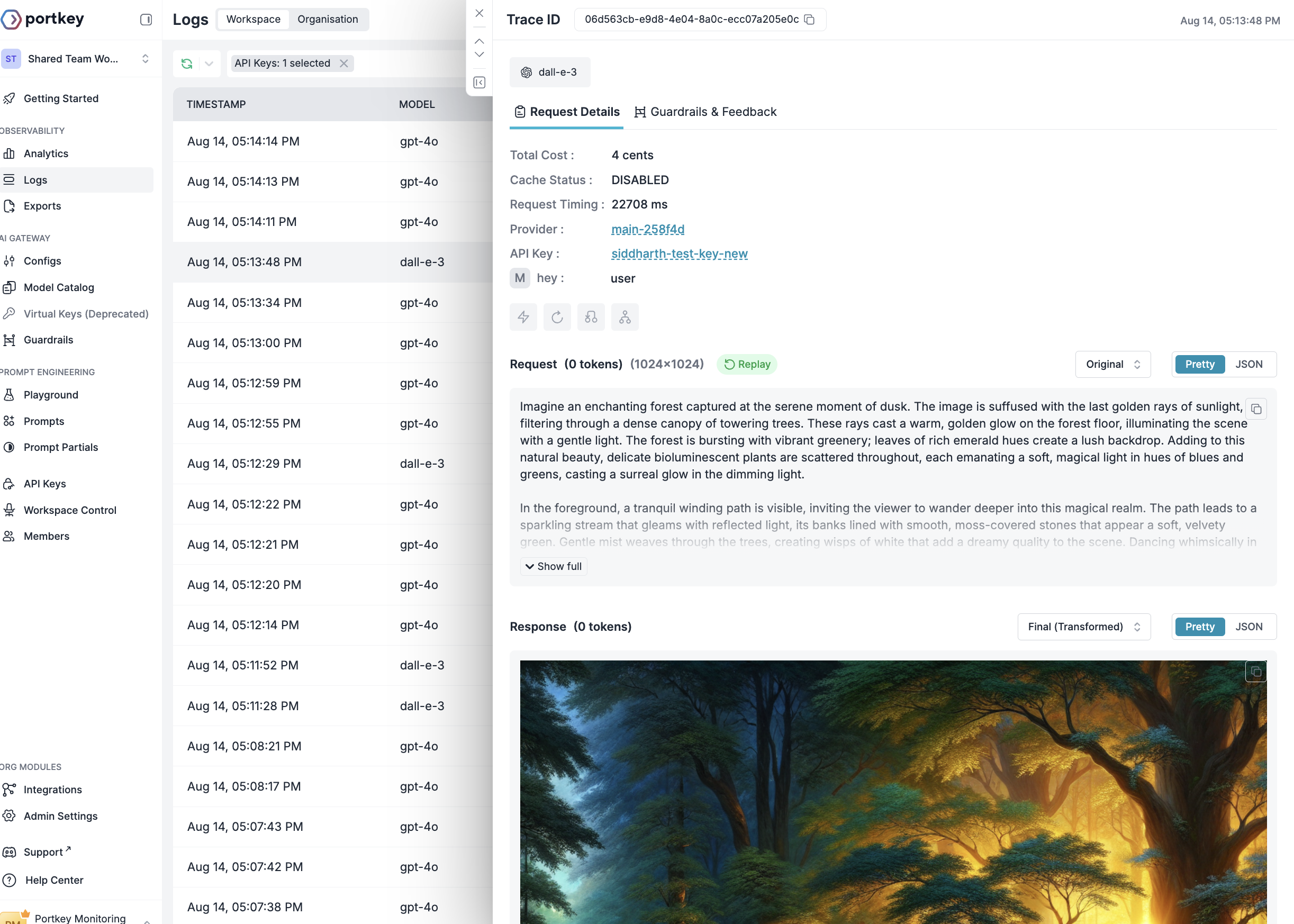

- Open the Portkey Logs dashboard.

- You should now see detailed user attribution in your logs:

  - User email, name, and role visible in request metadata

  - Filter logs by specific users or teams

  - Track costs and usage per team member

Success! You’ve solved the shared API key problem. Every request now shows which Open WebUI user originated it, enabling true enterprise observability.

Apply Additional Governance (Optional)

- Reference Configs at the API key level to enable routing, fallbacks, caching, or segmentation. Note: Since Open WebUI doesn’t support custom headers, you must use default configs attached to your API key.

- Set up budget limits and rate controls through Portkey’s Model Catalog.

- All governance policies automatically apply across Open WebUI users via the shared setup.

What You’ll See in Portkey

Once configured, the manifold pipe automatically captures and displays Open WebUI user context in every request. User emails appear directly in the User column of your logs list view—no need to click into individual entries.

- User email – Appears in the User column and metadata (via the special `_user` field)

- User name – Full name from Open WebUI user profile

- User role – Admin, user, or custom roles for access control

- Chat ID – Track conversations and session context

- User ID – Unique identifier for programmatic filtering
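The mapping from Open WebUI user fields to Portkey metadata can be sketched in a few lines of Python. This is an illustration, not the pipe's source: it assumes the `__user__` dictionary carries `id`, `email`, `name`, and `role` keys, that the chat ID is passed in separately, and that metadata values should be plain strings. Apart from the special `_user` key, the metadata key names (`user_name`, `user_role`, `user_id`) are hypothetical.

```python
import json
from typing import Any, Dict, Optional


def build_metadata_header(user: Dict[str, Any], chat_id: Optional[str] = None) -> Dict[str, str]:
    """Map an Open WebUI-style __user__ dict onto an x-portkey-metadata header."""
    metadata = {
        "_user": user.get("email"),     # special key: surfaces in Portkey's User column
        "user_name": user.get("name"),  # hypothetical key name
        "user_role": user.get("role"),  # hypothetical key name
        "user_id": user.get("id"),      # hypothetical key name
        "chat_id": chat_id,
    }
    # Assumption: metadata values should be plain strings, so drop missing
    # values and stringify the rest before JSON-encoding the header.
    clean = {key: str(value) for key, value in metadata.items() if value}
    return {"x-portkey-metadata": json.dumps(clean)}
```

The returned dictionary would be merged into the headers of each outgoing request, which is what lets a single shared API key still produce per-user log entries.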

How the Manifold Pipe Solves Enterprise Attribution

The manifold pipe bridges Open WebUI’s user context with Portkey’s observability, solving a critical enterprise pain point. Instead of anonymous traffic behind a shared API key, you get:

- Cost attribution per user or department

- Usage analytics segmented by team member

- Compliance & audit trails showing who accessed sensitive models

- Governance policies based on user roles and permissions

The pipe leverages Open WebUI’s `__user__` context object, which contains user details passed from the frontend. This data is automatically formatted as Portkey metadata and attached to every request.

3. Set Up Enterprise Governance for Open WebUI

Why Enterprise Governance? If you are using Open WebUI inside your organization, consider the following governance pillars:

- Cost Management: Controlling and tracking AI spending across teams

- Access Control: Managing which teams can access specific models

- Usage Analytics: Understanding how AI is being used across the organization

- Security & Compliance: Maintaining enterprise security standards

- Reliability: Ensuring consistent service across all users

- Model Management: Governing how models are provisioned and updated

Step 1: Implement Budget Controls & Rate Limits

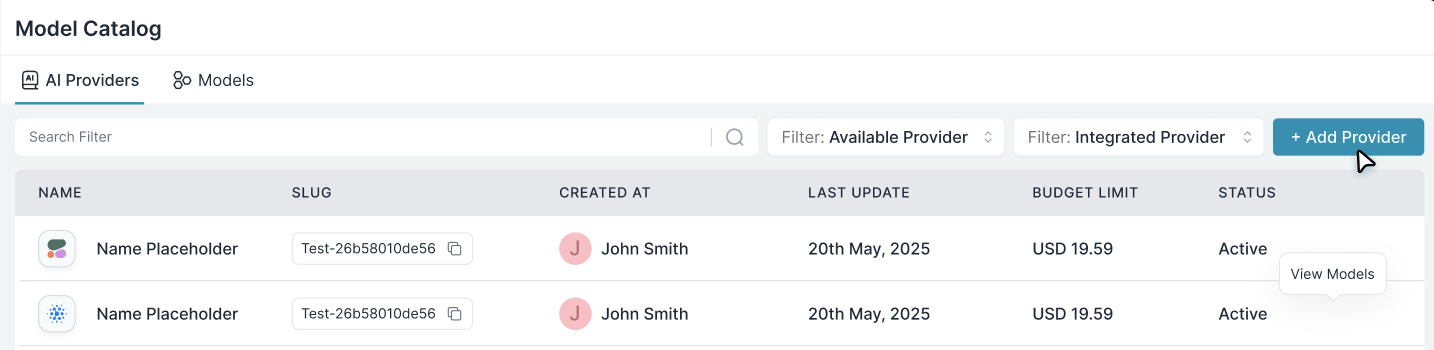

Model Catalog enables you to have granular control over LLM access at the team/department level. This helps you:

- Set up budget & rate limits

- Prevent unexpected usage spikes using rate limits

- Track departmental spending

Setting Up Department-Specific Controls:

- Navigate to Model Catalog in Portkey dashboard

- Create a new Provider for each engineering team with budget limits and rate limits

- Configure department-specific limits

Step 2: Define Model Access Rules

Use the Model Catalog to provision which models are exposed to each integration or workspace.

Step 3: Set Routing Configuration

Portkey Configs control routing logic, fallbacks, caching, and data protection. Create or edit configs in the Configs Library. Since Open WebUI doesn’t support custom headers, attach default configs to your API keys rather than referencing them in requests.

Configs can be updated anytime to adjust policies without redeploying Open WebUI.

Step 4: Implement Access Controls

Create user- or team-specific API keys that automatically:

- Track usage with metadata

- Apply the right configs and access policies

- Enforce scoped permissions

Step 5: Deploy & Monitor

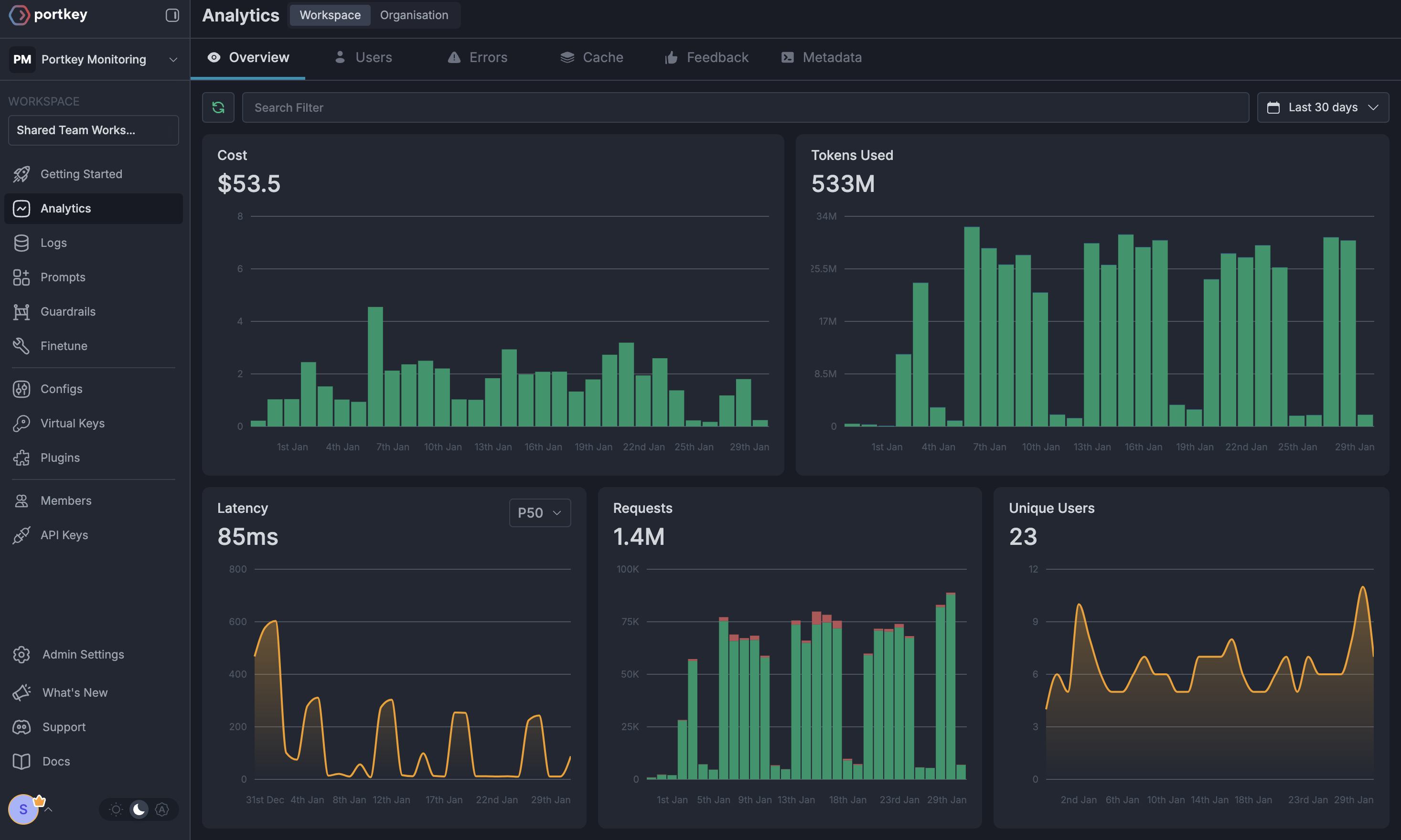

Distribute API keys, apply your governance defaults, and monitor live usage inside the Portkey dashboard:

- Cost tracking by department

- Model usage patterns

- Request volumes and error rates

- Audit-ready metadata

Enterprise Features Now Available

Open WebUI now has:

- Per-developer budget controls

- Model access governance

- Usage tracking & attribution

- Code security guardrails

- Reliability features for development workflows

4. Image Generation with Portkey

Portkey enables seamless image generation through Open WebUI by providing a unified interface for various image generation models like DALL-E 2, DALL-E 3, and other compatible models. This integration allows you to leverage Portkey’s enterprise features including cost tracking, access controls, and observability for all your image generation needs.

Setting Up Image Generation

Before proceeding, ensure you have completed the workspace setup in Step 1 and have your Portkey API key ready. Image generation works with either integration path above.

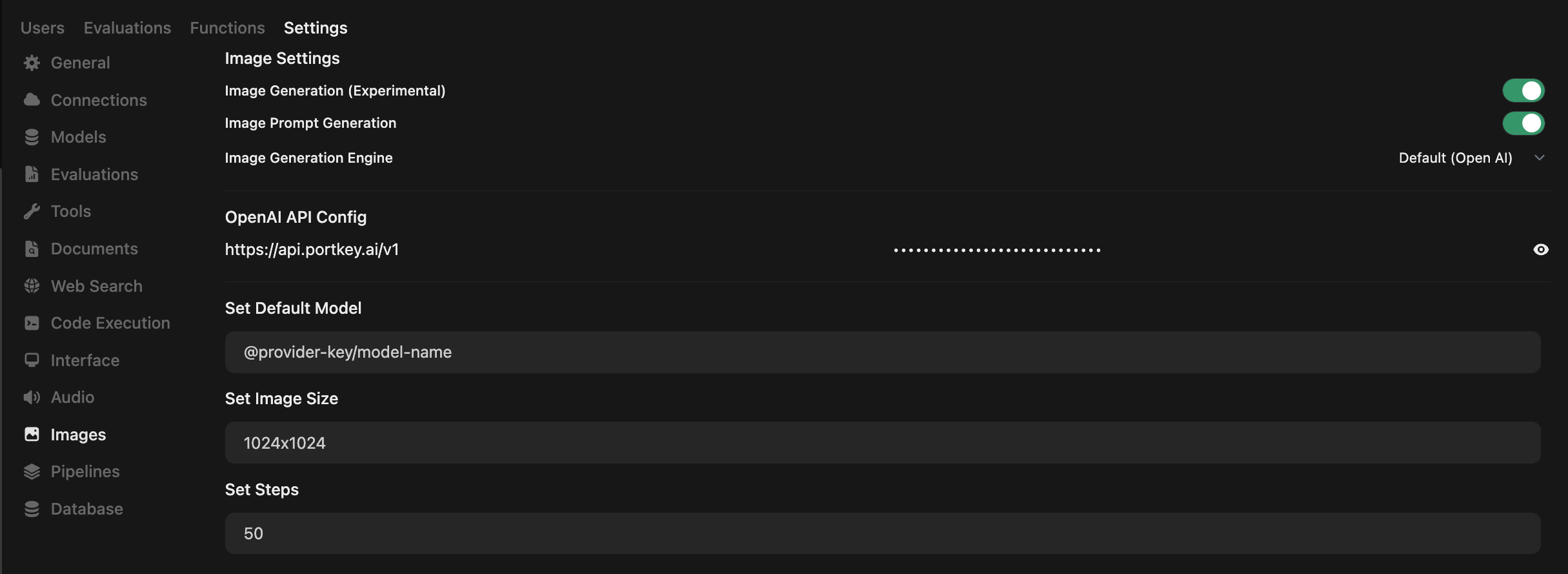

Access Image Settings

- Navigate to your Open WebUI Admin Panel

- Go to Settings → Images from the sidebar

Configure Image Generation Engine

In the Image Settings page, configure the following:

- Enable Image Generation: Toggle ON the Image Generation (Experimental) option

- Image Generation Engine: Select Default (Open AI) from the dropdown

- OpenAI API Config: Enter Portkey’s base URL: `https://api.portkey.ai/v1`

- API Key: Enter your Portkey API key (from Step 1)

- Set Default Model: Enter your model slug in the format `@provider-slug/model-name`. For example: `@openai-test/dall-e-3`

Configure Model-Specific Settings

Choose the model you wish to use. Note that image size options will depend on the selected model:

- DALL·E 2: Supports 256x256, 512x512, or 1024x1024 images.

- DALL·E 3: Supports 1024x1024, 1792x1024, or 1024x1792 images.

- GPT-Image-1: Supports auto, 1024x1024, 1536x1024, or 1024x1536 images.

- Other Models: Check your provider’s documentation (Gemini, Vertex, and others) for supported sizes.
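If you script against the same setup, a small pre-flight check can catch an unsupported size before the request reaches Portkey. The Python sketch below encodes the sizes listed above and builds an OpenAI-style image-generation body (the shape POSTed to an `/images/generations` endpoint). Deriving the model name from the part of the slug after the first `/` is an assumption, `build_image_request` is a hypothetical helper, and models not in the table are passed through unchecked.

```python
from typing import Dict, Set

# Supported sizes as listed above; check provider docs for any other model.
SUPPORTED_SIZES: Dict[str, Set[str]] = {
    "dall-e-2": {"256x256", "512x512", "1024x1024"},
    "dall-e-3": {"1024x1024", "1792x1024", "1024x1792"},
    "gpt-image-1": {"auto", "1024x1024", "1536x1024", "1024x1536"},
}


def build_image_request(model_slug: str, prompt: str, size: str) -> Dict[str, str]:
    """Check the size for known models, then build an OpenAI-style image body."""
    # Assumption: the model name is whatever follows the first '/' in the slug,
    # e.g. "@openai-test/dall-e-3" -> "dall-e-3".
    model = model_slug.split("/", 1)[-1].lower()
    allowed = SUPPORTED_SIZES.get(model)
    if allowed is not None and size not in allowed:
        raise ValueError(f"{model} does not support {size}; use one of {sorted(allowed)}")
    return {"model": model_slug, "prompt": prompt, "size": size}
```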

Test Your Configuration

- Return to the main chat interface

- Type a prompt and click the image generation icon

- Your image will be generated using Portkey’s infrastructure

- Track usage and costs in the Portkey Dashboard

Monitoring Image Generation

All image generation requests through Portkey are automatically tracked with:

- Cost Attribution: See exact costs per image generation

- Request Logs: Full prompt and response tracking

- Performance Metrics: Generation time and success rates

- Metadata Tags: Track image generation by team/department

Pro Tip: If you are using a different AI provider (Gemini, Vertex AI, etc.) and need additional parameters for image generation, add them to a Portkey Config as `override_params` and attach it to your API key. Here’s a guide.

Portkey Features

Now that you have an enterprise-grade Open WebUI setup, explore the comprehensive features Portkey provides to ensure secure, efficient, and cost-effective AI operations.

1. Comprehensive Metrics

Using Portkey you can track 40+ key metrics including cost, token usage, response time, and performance across all your LLM providers in real time. You can also filter these metrics based on custom metadata that you can set in your configs. Learn more about metadata here.

2. Advanced Logs

Portkey’s logging dashboard provides detailed logs for every request made to your LLMs. These logs include:

- Complete request and response tracking

- Metadata tags for filtering

- Cost attribution and much more…

3. Unified Access to 1600+ LLMs

You can easily switch between 1600+ LLMs. Call models from providers such as Anthropic, Gemini, Mistral, Azure OpenAI, Google Vertex AI, AWS Bedrock, and many more by simply changing the model slug in your default config object.

4. Advanced Metadata Tracking

Using Portkey, you can add custom metadata to your LLM requests for detailed tracking and analytics. Use metadata tags to filter logs, track usage, and attribute costs across departments and teams.

Custom Metadata

5. Enterprise Access Management

Budget Controls

Set and manage spending limits across teams and departments. Control costs with granular budget limits and usage tracking.

Single Sign-On (SSO)

Enterprise-grade SSO integration with support for SAML 2.0, Okta, Azure AD, and custom providers for secure authentication.

Organization Management

Hierarchical organization structure with workspaces, teams, and role-based access control for enterprise-scale deployments.

Access Rules & Audit Logs

Comprehensive access control rules and detailed audit logging for security compliance and usage tracking.

6. Reliability Features

Fallbacks

Automatically switch to backup targets if the primary target fails.

Conditional Routing

Route requests to different targets based on specified conditions.

Load Balancing

Distribute requests across multiple targets based on defined weights.
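Portkey performs load balancing server-side from the weights in your config, so you do not need to implement it yourself. Purely to illustrate what "based on defined weights" means, the Python sketch below shows weighted random selection: a target with weight 0.7 out of 1.0 receives roughly 70% of requests over time. `pick_target` is a hypothetical helper, not Portkey's routing code.

```python
import random
from typing import Optional, Sequence


def pick_target(
    targets: Sequence[str],
    weights: Sequence[float],
    rng: Optional[random.Random] = None,
) -> str:
    """Weighted random choice: weight 0.7 of 1.0 gets about 70% of requests."""
    if not targets or len(targets) != len(weights):
        raise ValueError("need one weight per target")
    draw = rng.random() if rng is not None else random.random()
    r = draw * sum(weights)  # a point on the [0, total) line
    cumulative = 0.0
    for target, weight in zip(targets, weights):
        cumulative += weight
        if r < cumulative:
            return target
    return targets[-1]  # guard against floating-point rounding at the top edge
```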

Caching

Enable caching of responses to improve performance and reduce costs.
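Portkey's cache is configured rather than coded. To show the idea behind exact-match caching, the Python sketch below hashes a canonical JSON form of the request body, so two requests that differ only in key order share one cached response. `cache_key` and `SimpleCache` are hypothetical in-memory illustrations, not how Portkey stores its cache.

```python
import hashlib
import json
from typing import Any, Callable, Dict


def cache_key(body: Dict[str, Any]) -> str:
    """Exact-match key: bodies equal up to key order hash to the same value."""
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class SimpleCache:
    """Hypothetical in-memory exact-match cache (no expiry, no size limit)."""

    def __init__(self) -> None:
        self._store: Dict[str, Any] = {}
        self.hits = 0

    def get_or_call(self, body: Dict[str, Any], call: Callable[[Dict[str, Any]], Any]) -> Any:
        key = cache_key(body)
        if key in self._store:
            self.hits += 1  # served without calling the model again
            return self._store[key]
        response = call(body)
        self._store[key] = response
        return response
```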

Smart Retries

Automatic retry handling with exponential backoff for failed requests.

Budget Limits

Set and manage budget limits across teams and departments. Control costs with granular budget limits and usage tracking.

7. Advanced Guardrails

Protect your project’s data and enhance reliability with real-time checks on LLM inputs and outputs. Leverage guardrails to:

- Prevent sensitive data leaks

- Enforce compliance with organizational policies

- Detect and mask PII

- Filter content

- Apply custom security rules

- Run data compliance checks

Guardrails

Implement real-time protection for your LLM interactions with automatic detection and filtering of sensitive content, PII, and custom security rules. Enable comprehensive data protection while maintaining compliance with organizational policies.
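Guardrails are configured in Portkey and run on Portkey's side. As a toy illustration of what "detection and masking" of PII means, the Python sketch below replaces email addresses and phone-like numbers with placeholders. The two regular expressions are deliberately simple assumptions that would miss many real-world formats; they are not Portkey's detectors.

```python
import re

# Deliberately simple, illustrative patterns; real PII detection needs far more.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def mask_pii(text: str) -> str:
    """Replace email addresses and phone-like digit runs with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)
```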

FAQs

Can I use multiple LLM providers with the same API key?

Yes! You can create multiple Integrations (one for each provider) and attach them to a single config. This config can then be connected to your API key, allowing you to use multiple providers through a single API key.

How do I track costs for different teams?

Portkey provides several ways to track team costs:

- Create separate Integrations for each team

- Use metadata tags in your configs

- Set up team-specific API keys

- Monitor usage in the analytics dashboard
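Metadata tagging pays off because costs can then be grouped by any tag. The dashboard does this for you; the Python sketch below only shows the underlying aggregation. The log-record shape (a `metadata` dict plus a numeric `cost`) and the `team` key are hypothetical, chosen for illustration rather than taken from Portkey's log format.

```python
from collections import defaultdict
from typing import Any, Dict, Iterable, Mapping


def cost_by_team(logs: Iterable[Mapping[str, Any]], key: str = "team") -> Dict[str, float]:
    """Sum request cost per metadata value; untagged requests are grouped together."""
    totals: Dict[str, float] = defaultdict(float)
    for entry in logs:
        team = entry.get("metadata", {}).get(key, "untagged")
        totals[team] += float(entry.get("cost", 0.0))
    return dict(totals)
```

The "untagged" bucket is the practical argument for consistent tagging: any spend that lands there cannot be attributed to a team.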

What happens if a team exceeds their budget limit?

When a team reaches their budget limit:

1. Further requests will be blocked

2. Team admins receive notifications

3. Usage statistics remain available in the dashboard

4. Limits can be adjusted if needed

Next Steps

Join our Community

For enterprise support and custom features, contact our enterprise team.